After discussing about how MS SQL Server can help us to format MS SQL data in JSON format and how to read JSON data in MS SQL Server, in this article we will quickly pass over some additional functions, tips and tricks and possibilities handling JSON data in MS SQL Server.

JSON_VALUE

JSON_VALUE is one of the two functions with the purpose to extract values from JSON text. The second one is JSON_QUERY. While JSON_QUERY is used to extract a JSON fragment or to get a complex value type (array or object), JSON_VALUE is used to extract a primitive data type value (null, string, number or Boolean).

Syntax:

JSON_VALUE (expression, path)

- expression - an expression. Typically, the name of a variable or a column that contains JSON text

- path - a JSON path that specifies the property to extract

The function returns a single text value of type NVARCHAR(4000). The collation of the returned value is the same as the collation of the input expression. If the value is greater than 4000 characters:

- in lax mode - returns null

- in strict mode - returns an error

We can use the JSON_VALUE in any part of the statement (SELECT, FROM, WHERE). You can also use it in computed columns and we will see an example of this later in this article.

Let’s try to extract some values from a JSON document.

Example:

DECLARE @JSON_data NVARCHAR(MAX) = N'{

"Name": "John Doe",

"BlogURL": "http:\\www.microsoft.com",

"Born": 1979,

"Spouse":null,

"BornAfterWoodstock": true,

"FavoriteColors": ["Red", "Purple", "Green"]

}';

SELECT

JSON_VALUE(@JSON_data, '$.Name') AS Name

, JSON_VALUE(@JSON_data, '$.BornAfterWoodstock') AS BornAfterWoodstock

, JSON_VALUE(@JSON_data, '$.FavoriteColors') AS FavoriteColors

, JSON_VALUE(@JSON_data, '$.FavoriteColors[1]') AS SecondColor

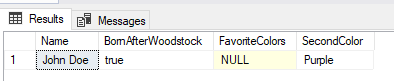

As you can see all the values are strings (NVARCHAR(4000)). We were able to extract the second color from the list of favorite colors but unable to return the whole list (object) “FavoriteColors” because JSON_VALUE understand only primitive data types. The result for the “FavoriteColors” node is NULL.

If we try to use the strict mode in the same select as before the result will be an exception. The reason for that is because in lax mode (not strict) the error is suppressed if we try to select the value for a complex data type.

Example:

SELECT

JSON_VALUE(@JSON_data, 'strict $.Name') AS Name

, JSON_VALUE(@JSON_data, 'strict $.BornAfterWoodstock') AS BornAfterWoodstock

, JSON_VALUE(@JSON_data, 'strict $.FavoriteColors') AS FavoriteColors

, JSON_VALUE(@JSON_data, 'strict $.FavoriteColors[1]') AS SecondColor

"Msg 13623, Level 16, State 1, Line 9

Scalar value cannot be found in the specified JSON path."

Since the result of the JSON_VALUE is a NVARCHAR(4000) field let’s see what happens when we try to select JSON values larger than that.

Example:

DECLARE @LargeJSON NVARCHAR(MAX) = CONCAT('{"data":"', REPLICATE('0',4096), '",}')

SELECT

JSON_VALUE(@LargeJSON, '$.data') AS LargeData;

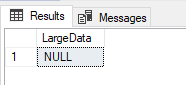

The result is NULL. This is because we didn't use the strict mode and the error is suppressed. Let’s see what happens when using the strict mode.

Example:

DECLARE @LargeJSON NVARCHAR(MAX) = CONCAT('{"data":"', REPLICATE('0',4096), '",}')

SELECT

JSON_VALUE(@LargeJSON, 'strict $.data') AS LargeData;

"Msg 13625, Level 16, State 1, Line 2

String value in the specified JSON path would be truncated."

JSON_QUERY

The second function to extract values from a JSON text is JSON_QUERY.

Syntax:

JSON_QUERY (expression, path)

- expression - an expression. Typically, the name of a variable or a column that contains JSON text

- path - a JSON path that specifies the object or the array to extract

The function returns a JSON fragment of type NVARCHAR(4000). The collation of the returned value is the same as the collation of the input expression. If the value is not an object or an array:

- in lax mode - returns null

- in strict mode - returns an error

Let’s try to extract some values from a JSON document.

Example:

DECLARE @JSON_data NVARCHAR(MAX) = N'{

"Name": "John Doe",

"BlogURL": "http:\\www.microsoft.com",

"Born": 1979,

"Spouse":null,

"BornAfterWoodstock": true,

"FavoriteColors": ["Red", "Purple", "Green"]

}';

SELECT

JSON_QUERY(@JSON_data, '$.Name') AS Name

, JSON_QUERY(@JSON_data, '$.BornAfterWoodstock') AS BornAfterWoodstock

, JSON_QUERY(@JSON_data, '$.FavoriteColors') AS FavoriteColors

, JSON_QUERY(@JSON_data, '$.FavoriteColors[1]') AS SecondColor

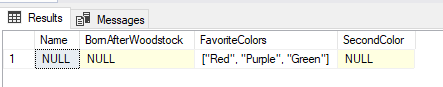

Complementary to JSON_VALUE all the primitive data types are returned as NULL. Only the complex value (array) is returned in the result.

Let’s test JSON_QUERY using the strict option.

Example:

DECLARE @JSON_data NVARCHAR(MAX) = N'{

"Name": "John Doe",

"BlogURL": "http:\\www.microsoft.com",

"Born": 1979,

"Spouse":null,

"BornAfterWoodstock": true,

"FavoriteColors": ["Red", "Purple", "Green"]

}';

SELECT

JSON_QUERY(@JSON_data, 'strict $.Name') AS Name

, JSON_QUERY(@JSON_data, 'strict $.BornAfterWoodstock') AS BornAfterWoodstock

, JSON_QUERY(@JSON_data, 'strict $.FavoriteColors') AS FavoriteColors

, JSON_QUERY(@JSON_data, 'strict $.FavoriteColors[1]') AS SecondColor

"Msg 13624, Level 16, State 1, Line 9

Object or array cannot be found in the specified JSON path."

ISJSON

ISJSON is a simple function to validate if the input string contains a valid JSON document.

Syntax:

ISJSON (expression)

- expression - The string to test

The function returns:

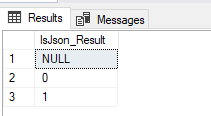

- 1 - if the string contains valid JSON

- 0 - if the string is not a valid JSON

- NULL - if expression is NULL

Let's see a quick example.

Example:

SELECT ISJSON(NULL) AS IsJson_Result

UNION

SELECT ISJSON(N'"Name": "John Doe"') AS IsJson_Result

UNION

SELECT ISJSON(N'{

"Name": "John Doe",

"URL": "http:\/\/www.microsoft.com"

,"Meetups":["New SQL 2016/2017 functions","SQL & JSON"]}') AS IsJson_Result

If we are planning to store JSON data in a database table, we could use ISJSON to create a check constraint to assure that only valid JSON data is inserted in the database. Let’s see one example for this.

Example:

USE WideWorldImporters;

GO

DROP TABLE IF EXISTS dbo.TestUserSettings;

GO

CREATE TABLE dbo.TestUserSettings(

[Key] NVARCHAR(256) NOT NULL,

App_Settings NVARCHAR(MAX) NULL CONSTRAINT CK_user_settings CHECK (ISJSON(App_Settings) = 1)

);

GO

INSERT INTO dbo.TestUserSettings ([Key], App_Settings) VALUES ('key1', NULL);

INSERT INTO dbo.TestUserSettings ([Key], App_Settings) VALUES ('key1', N'"Name": "John Doe"');

INSERT INTO dbo.TestUserSettings ([Key], App_Settings) VALUES ('key1', N'{

"Name": "John Doe",

"URL": "http:\/\/www.microsoft.com"

,"Meetups":["New SQL 2016/2017 functions","SQL & JSON"]}');

GO

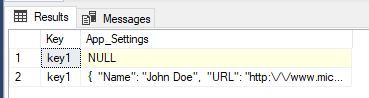

SELECT * FROM dbo.TestUserSettings;

GO

DROP TABLE IF EXISTS dbo.TestUserSettings;

GO

In the example we first created a test table named dbo.TestUserSettings. It consist of two columns and on the second column (App_Settings) a check constraint was defined using ISJSON function. After that we try to insert 3 rows:

- Inserting NULL value passed because the column App_Settings is defined as nullable

- Inserting an invalid JSON failed because of the check constraint defined. Also, an exception has been raised: “Msg 547, Level 16, State 0, Line 11; The INSERT statement conflicted with the CHECK constraint "CK_user_settings". The conflict occurred in database "WideWorldImporters", table "dbo.TestUserSettings", column 'App_Settings'.”

- Inserting a valid JSON passed

Selecting all rows from the test table we can see that it contains only 2 rows (NULL and the one containing a valid JSON).

At the end we removed the test table.

Import JSON data from a file

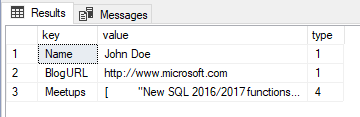

You can directly read JSON data from a file located on a disk location accessible from MS SQL Server. The process is a simple implementation of a OPENROWSET bulk load from a data file combined with a CROSS APPLY with the OPENJSON function.

Example:

SELECT [key], [value], [type]

FROM OPENROWSET (BULK 'C:\Private\JSON_data.json', SINGLE_CLOB) AS x

CROSS APPLY OPENJSON(BulkColumn);

*You can download the demo JSON file used in this example here: JSON_data.json (162.00 bytes)

Indexing JSON data

Since JSON can be stored in the database using NVARCHAR(MAX) data type or VARBINARY(MAX) using COMPRESS function it’s easy to understand that storing such data is not so optimized and maybe you should avoid it. Nevertheless, if you need to store data in JSON format in the database there is a slightly chance that you also have the need to search through the data. You want the search to be fast and optimized? Ok, let’s see what the possibilities are to accomplish this task. First, we will create a test table and fill it with some test data.

Example:

USE WideWorldImporters;

GO

DROP TABLE IF EXISTS dbo.JSONIndexing;

GO

CREATE TABLE dbo.JSONIndexing(

[CustomerID] INT NOT NULL,

[CustomerData] NVARCHAR(2000) NULL,

CONSTRAINT PK_JSONIndexing PRIMARY KEY CLUSTERED([CustomerID])

);

GO

INSERT INTO dbo.JSONIndexing ([CustomerID], [CustomerData])

SELECT

[CustomerID]

, ( SELECT

C1.[CustomerName] AS Name

, PC1.FullName AS PrimaryContact

, C1.PhoneNumber AS 'Contact.Phone'

, C1.FaxNumber AS 'Contact.Fax'

, C1.WebsiteURL

FROM

[Sales].[Customers] C1

LEFT JOIN [Application].[People] PC1 ON C1.PrimaryContactPersonID = PC1.PersonID

WHERE

C1.CustomerID = C.CustomerID FOR JSON PATH, WITHOUT_ARRAY_WRAPPER)

FROM [Sales].[Customers] C

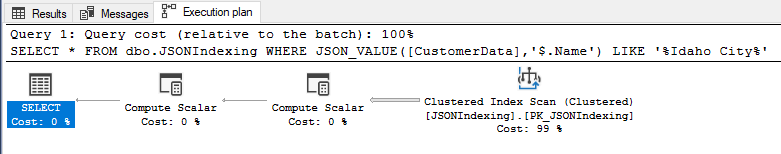

We will try to select Idaho City customers with no indexes and see how the execution plan looks.

Example:

SELECT * FROM dbo.JSONIndexing WHERE JSON_VALUE([CustomerData],'$.Name') LIKE '%Idaho City%';

We can see a clustered index scan. Also, I turned on the query statistics and I can see that the query took 35 logical reads and used 16ms of CPU time. The test table has only 633 rows and a total size of 0.258 MB, so it is a small sample data and my conclusion is that it is performing slow.

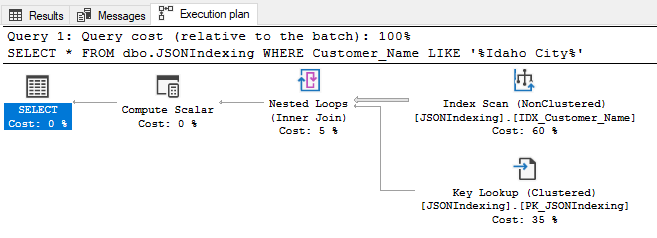

Computed column with index

First possibility to solve the issue could be to create and index on a computed column. This should help when there is one JSON property that could be a nice indexing candidate. In this case I want to create an index on the customer name (node “name”).

Example:

ALTER TABLE dbo.JSONIndexing ADD Customer_Name AS JSON_VALUE([CustomerData], '$.Name');

CREATE INDEX IDX_Customer_Name ON dbo.JSONIndexing(Customer_Name);

Let’s see how the execution plan looks now.

The newly created index was used. The execution plan looks better, the query took 9 logical read and used <1ms of CPU time. Somehow better and you can try by yourself on larger data sets for better conclusions.

We will drop the new index and the column for the next example.

Example:

DROP INDEX [IDX_Customer_Name] ON [dbo].[JSONIndexing]

ALTER TABLE [dbo].[JSONIndexing] DROP COLUMN Customer_Name

Full-text index

If the case is that we want to search through the whole JSON document because we can’t identify some suitable nodes for indexing like in the previous example, we can speed up things creating u full-text index on a column containing JSON data.

Example:

CREATE FULLTEXT CATALOG FullTextCatalog AS DEFAULT;

CREATE FULLTEXT INDEX ON [dbo].[JSONIndexing]([CustomerData]) KEY INDEX PK_JSONIndexing ON FullTextCatalog;

GO

/* Wait for the index to be populated */

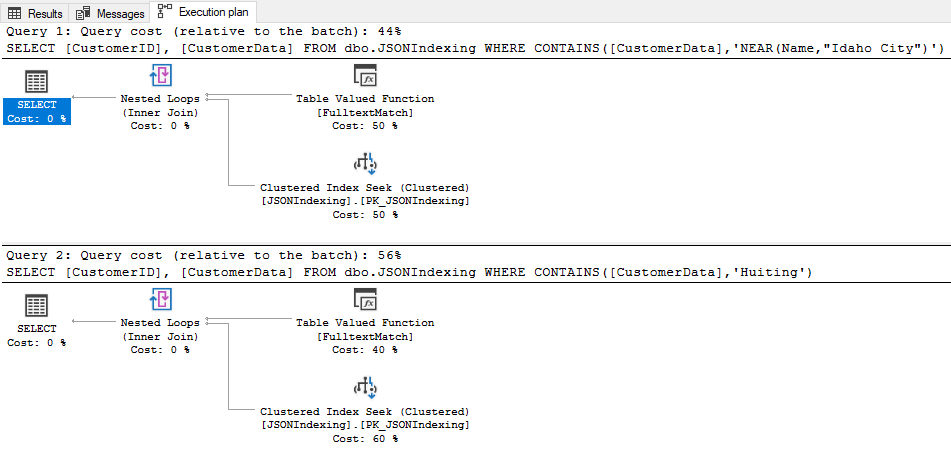

SELECT [CustomerID], [CustomerData] FROM dbo.JSONIndexing WHERE CONTAINS([CustomerData],'NEAR(Name,"Idaho City")');

SELECT [CustomerID], [CustomerData] FROM dbo.JSONIndexing WHERE CONTAINS([CustomerData],'Huiting');

In the execution plan we can see that both queries used the full-text index. Both queries used <1ms of CPU times. Like before, it is not an ideal approach but, in some cases, maybe you are forced to use it.

At the end, let’s drop our indexes and the test table.

Example:

DROP TABLE dbo.JSONIndexing;

DROP FULLTEXT CATALOG FullTextCatalog;

Compare two table rows using JSON

In this example I will show you a cool usage of JSON in MS SQL Server.

Imagine you have the need to compare two rows column by column in a table and to see differences value by value. It is an easy task if you have two or three columns to compare, but if we are talking about twenty even hundreds of columns the problem is more complex since you should compare column by column in the WHERE part of the statement etc.

For the purpose I will create a sample table and we will imagine that it is needed for some configuration settings. I will insert two rows that obviously will be different. Of course, you can use this technique on two different tables or even databases but to keep it simple we will test it on two rows of the same table.

Example:

USE WideWorldImporters;

GO

DROP TABLE IF EXISTS dbo.TestCompareRecords;

GO

CREATE TABLE dbo.TestCompareRecords(

[Key] INT NOT NULL,

App_Settings NVARCHAR(MAX) NULL,

App_ID INT NOT NULL,

App_Version NVARCHAR(256) NOT NULL,

Active BIT NOT NULL,

AdditionalData NVARCHAR(MAX) NULL

);

GO

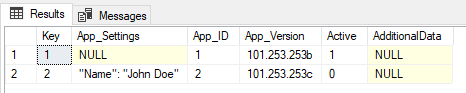

INSERT INTO dbo.TestCompareRecords ([Key], App_Settings, App_ID, App_Version, Active, AdditionalData) VALUES (1, NULL, 1, '101.253.253b', 1, NULL);

INSERT INTO dbo.TestCompareRecords ([Key], App_Settings, App_ID, App_Version, Active, AdditionalData) VALUES (2, N'"Name": "John Doe"', 2, '101.253.253c', 0, NULL);

GO

SELECT * FROM dbo.TestCompareRecords

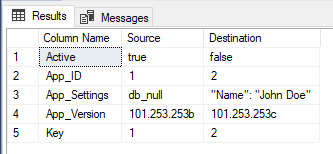

Let’s now write the query to compare the two rows column by column. We will convert the two rows to JSON using FOR JSON AUTO, INCLUDE_NULL_VALUES, WITHOUT_ARRAY_WRAPPER, then use the OPENJSON with the default schema and join the resulting tables by the “key” column and display side by side the “value” column.

Example:

SELECT

A.[key] AS "Column Name"

, ISNULL(A.[value], 'db_null') AS Source

, ISNULL(B.[value], 'db_null') AS Destination

FROM

OPENJSON (

( SELECT * FROM dbo.TestCompareRecords WHERE [Key] = 1 FOR JSON AUTO, INCLUDE_NULL_VALUES, WITHOUT_ARRAY_WRAPPER)) A

INNER JOIN OPENJSON((SELECT * FROM dbo.TestCompareRecords WHERE [Key] = 2 FOR JSON AUTO, INCLUDE_NULL_VALUES, WITHOUT_ARRAY_WRAPPER)

) B

ON A.[key] = B.[key] AND ISNULL(A.[value], 'db_null') <> ISNULL(B.[value], 'db_null')

And that’s all folks 😊 Just a final cleanup and we are finished.

Example:

DROP TABLE dbo.TestCompareRecords;

*MS SQL Server sample database "WideWorldImporters" can be downloaded at: https://github.com/Microsoft/sql-server-samples/releases/tag/wide-world-importers-v1.0

*You can download the complete SQL Script with all examples from the post here: SQL Script.sql (6.82 kb)